You’ve probably heard the phrase “content is king” at some point in the last 10 years. It’s been used as the mantra for everything from TV programming to website building, and it is meant to remind those in marketing or advertising that what people want - what matters - is the content: the story, the words on the page. The current hot topic - the Justin Bieber of the business world - is Big Data. Big data is all about content. Lots and lots of content, delivered every second of every minute, all day, every day, everywhere. Big data is an enormous mountain of data, and the business world is still in the early stages of figuring out what to do with it.


But what’s missing from big data analysis today is context. In the world of big data, context, not content, is king.

No one loves to play with data more than I do (the more data the merrier), but I have come to learn that simple insights delivered at the right time and place often generate the best results. The press has jumped on the bandwagon of big data as the future of business, yet business has been dealing with large and complex data for a long time. What has changed is that faster processors and larger, cheaper storage now let us analyze more data, more quickly. My concern is that the goal of data analysis should not be merely to understand the numbers, but to understand the behavior that causes the numbers in order to effect change. That understanding is often obscured by complex answers, when a simple answer delivered at just the right time would serve better. In weighing the complexity of understanding big data against the simplicity of smart data, relevancy and context are the keys to success.

Since ancient times, people have recorded events such as the motion of the planets and the exchange of goods to understand the behavior of nature and of people. This understanding led to better decisions, such as “When should I plant?” and “How much am I owed?” The complexity of planetary motion was not important to the farmer; all he needed to know was: “Should I plant this week or next?”

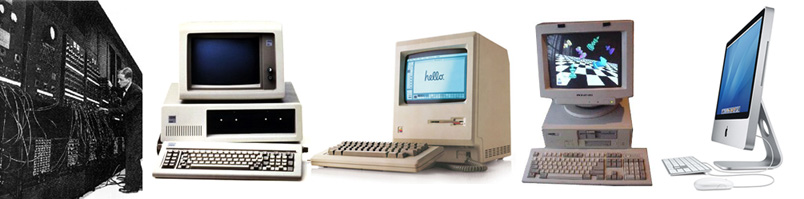

The amount of data recorded, and our ability to process it, has grown over time and accelerated in the last few decades. During the last 30 years, the price of a gigabyte of storage has dropped from $300,000 to less than $0.10. Over the last 20 years, the price of computing has fallen so dramatically that an iPad 2 is faster than any computer built before 1994! This dramatic change has put serious computing performance within reach of almost anyone.

To exploit this growing capacity and meet the need to handle ever-larger data, companies like SAS were formed (SAS was founded in 1976). This expanding capability was often applied to questions like the ones above, such as “When should I plant?” SAS itself began with exactly that case: processing the growing volume of agricultural data being collected. While understanding and testing theories was valuable, the big result for the farmer was a timely answer to a simple question: “Should I plant?”

Now Big Data has become a formal emphasis in its own right, and some experts are concerned that we are losing our way and worrying about the wrong problems. Danah Boyd (Microsoft) has raised concerns that the use of big data in science can neglect principles such as choosing a representative sample, because researchers become too preoccupied with simply handling huge amounts of data. Chris Anderson (Wired) has asserted that big data will spell the end of theory.

I agree that we are doing more analysis, but generating less understanding.

Worrying about the sheer mass of data often prevents us from seeing the obvious, or at least the simple insights that provide great value. The thermostat in your house doesn’t need to be very accurate. It doesn’t need to tell you the barometric reading in each room or the status of El Niño; it just needs to turn the heat on when it is cold.

A simple model with timely answers is valuable.
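The thermostat is a good picture of such a simple model. Here is a minimal Python sketch of one, for illustration only: the function name, the 20 °C setpoint, and the 0.5 °C band are assumptions, not taken from any real thermostat. The small band around the setpoint (hysteresis) is an added design choice that keeps the heat from switching on and off with every tiny fluctuation. Notice that the only inputs are the current temperature and whether the heat is already on.

```python
def heat_should_be_on(current_temp_c: float, heat_is_on: bool,
                      setpoint_c: float = 20.0, band_c: float = 0.5) -> bool:
    """Decide whether the heat should be on (hypothetical sketch).

    Only the current room temperature matters here; barometric readings,
    El Nino, and everything else are deliberately ignored.
    """
    if current_temp_c < setpoint_c - band_c:
        return True        # clearly cold: turn (or keep) the heat on
    if current_temp_c > setpoint_c + band_c:
        return False       # clearly warm: turn (or keep) the heat off
    return heat_is_on      # inside the band: keep doing what we were doing


# Feed a few illustrative temperature readings through the rule.
state = False
for reading in [21.0, 19.8, 19.2, 19.9, 20.6]:
    state = heat_should_be_on(reading, state)
    print(reading, "heat on" if state else "heat off")
```

With these defaults the heat comes on only once the room drops below 19.5 °C and goes off once it rises above 20.5 °C; no extra data would make that decision any more useful.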

What we need are small insights delivered at the right time. Knowing about traffic in general doesn’t really matter to you; you just need to know whether to turn left or right, now, to get to work on time. You don’t care about the specifics of why one route has become slower.

Major retailers know that when someone walks in their door today, that person is far more likely than 10 years ago to already know about products and prices from research done online and through social media. That research reveals what the consumer is interested in. While online, offline, and social sources will give the retailer more of the information it needs, we need to be careful not to get lost in that data. We may only need to know that the customer walked into the store 1 minute ago, Googled Christian Louboutin shoes 5 minutes ago, and did a Facebook check-in at the retailer’s biggest competitor down the street 20 minutes ago. The salesperson can use this information to facilitate a sale NOW. Sending an email or direct mail later would lose the opportunity of the moment. In this instance, it’s enough to know, as they walk in the door, who they are and what they have just done. Little insights from big data at the right time should be the goal. We see this in the new Foursquare release, which provides the simple insight of where people are likely to go next based on their current check-in. So again, it’s more about context: understanding when just a little bit of highly relevant data is better than a sea of big data. With simple insights at the right time, you don’t need to know a lot. You simply need to know it when you need it.
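The in-store scenario boils down to a filter over a stream of recent events. As a rough Python sketch, suppose the retailer already receives timestamped signals about a known customer. The `Event` record, its field names, the source labels, and the 30-minute window are all hypothetical; this does not describe any real retailer’s, Google’s, Facebook’s, or Foursquare’s system. The interesting part is what gets thrown away: everything outside the window, and everything about other customers.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    """One hypothetical signal about a customer."""
    customer_id: str
    timestamp: datetime
    source: str   # hypothetical labels, e.g. "in_store", "search", "social_checkin"
    detail: str


def recent_context(events, customer_id, now, window=timedelta(minutes=30)):
    """Return only this customer's events from the last `window`, newest first."""
    cutoff = now - window
    relevant = [
        e for e in events
        if e.customer_id == customer_id and cutoff <= e.timestamp <= now
    ]
    return sorted(relevant, key=lambda e: e.timestamp, reverse=True)


# Illustrative data only: an arbitrary "now" and made-up events.
now = datetime(2024, 1, 1, 12, 0)
events = [
    Event("c42", now - timedelta(days=3), "email", "opened spring catalog"),
    Event("c42", now - timedelta(minutes=20), "social_checkin", "competitor down the street"),
    Event("c42", now - timedelta(minutes=5), "search", "Christian Louboutin shoes"),
    Event("c42", now - timedelta(minutes=1), "in_store", "walked in the front door"),
    Event("c99", now - timedelta(minutes=2), "in_store", "a different customer"),
]

for e in recent_context(events, "c42", now):
    minutes_ago = int((now - e.timestamp).total_seconds() // 60)
    print(f"{minutes_ago} min ago [{e.source}] {e.detail}")
```

Run as-is, it prints three lines (1, 5, and 20 minutes ago); the three-day-old email and the other customer’s visit are filtered out. That short list is all the salesperson needs in the moment.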


One thought on “Big data, and why context is king”
